A Chinese AI, DeepSeek, has shaken the world. American tech companies and the US stock market have been shocked to an extent they could never have imagined, even in their dreams. It all began on 20th January 2025.

A Chinese research lab launched its AI chatbot, DeepSeek R1. Along with this, they also published their research paper.

In it, they said that their chatbot beats the world's most advanced chatbots on many benchmarks, such as maths and reasoning. That is, it left OpenAI's o1 model, Meta's Llama and Google's Gemini Advanced behind in one go. By their account, DeepSeek performs best, is more efficient, and takes the least time and money to build.

But the most amazing thing is that using it is completely free of cost for you and for all of us. OpenAI, on the other hand, charges $200 per month to use its o1 pro model. Not only this, the cost of training DeepSeek is reported to be only $5.6 million.

Meanwhile, American companies, whether OpenAI, Meta or Google, are spending billions of dollars to build their artificial intelligence models. Coincidentally, just a while ago, OpenAI's founder Sam Altman was asked whether Indians could also build foundational AI models like ChatGPT in India. He answered very arrogantly that no one can do this except us: you can try, but it will be hopeless.

Today, Sam might be the one feeling hopeless, because just a week after its launch, DeepSeek became the most downloaded app on the App Store and Google Play Store in America, leaving ChatGPT behind. The next day, it became the number one app on the App Store in India and other countries.

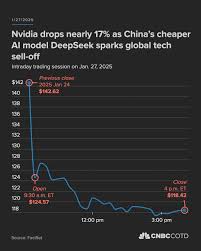

And by 27th January, America's financial markets were in turmoil. About $1 trillion was wiped off the US tech index. Before the launch of DeepSeek, the most valuable company in the world, NVIDIA, had a valuation of $3.5 trillion.

But in just one day, it fell to $2.9 trillion. This company makes computer chips and specializes in the chips used to train and operate AI. NVIDIA's shares fell by 17% in just one day, and its valuation fell by $589 billion.
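As a quick sanity check, the three NVIDIA figures quoted above can be compared with each other. The sketch below uses only the rounded numbers from this article, so the results are approximate and the variable names are purely illustrative.

```python
# Rounded figures quoted in the article, in billions of US dollars.
cap_before = 3500.0  # about $3.5 trillion before the drop
loss = 589.0         # market value wiped out in one day

cap_after = cap_before - loss
drop_pct = loss / cap_before * 100

print(f"Market cap after the drop: ${cap_after / 1000:.2f} trillion")  # 2.91
print(f"Percentage drop: {drop_pct:.1f}%")                             # 16.8%
```

Both results line up with the roughly $2.9 trillion valuation and the 17% share price fall reported for that day.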

NVIDIA's $589 billion drop is the biggest single-day loss for any company in history. The benchmark for US tech companies, the Nasdaq, also fell by 3.1%. But why did NVIDIA suffer such a big loss? There is a very interesting reason behind this, which we will talk about later. But before that, let's get to know the story behind DeepSeek.

Where did this AI come from and why did it shock the world? The credit for making DeepSeek goes to a 40-year-old Chinese entrepreneur, Liang Wenfeng.

He has made very few public appearances to date and keeps his identity quite hidden. Not much information about his life and history is available to the public.

But we do know that in 2015, he set up a hedge fund called High-Flyer, which used mathematics and AI to make investments. In 2019, he founded High-Flyer AI to research artificial intelligence algorithms.

Then, in May 2023, he used the money he was earning from his hedge fund to start a side project to build an AI model. Liang said that he wanted to make his own AI model, one better than all the AI models in the world, and that the reason behind it was scientific curiosity.

Earning profit was not his reason. When he started gathering a research team to build this AI model, he didn't hire engineers. Instead, he hired PhD students from China's top universities.

To train his AI model, the world's most difficult questions were used. And in under two years, spending a few million dollars, he launched the DeepSeek R1 model. It's super impressive.

We should take the development out of China very, very seriously. DeepSeek just taught us that the answer is less than people thought. You don’t need as much cash as we once thought.

Only 200 people were involved in making it, and 95% of them were under the age of 30. Compare that to a company like OpenAI, which employs more than 3,500 people.

Today, DeepSeek is China's only AI firm in which tech giants like Baidu, Alibaba and ByteDance have not invested. Its architecture is quite interesting to understand. DeepSeek is a chain-of-thought model.

OpenAI's o1 is also a chain-of-thought model. The naming here can be quite confusing, because OpenAI has given its models very strange names.

They are quite similar to each other. ChatGPT's first publicly launched model was GPT-3.5.

It was launched in November 2022. Then, in March 2023, they launched GPT-4, which was much better than GPT-3.5.

Then, in May 2024, GPT-4o was launched, a multimodal version of GPT-4. With it, you were no longer limited to talking through text.

You could also talk to it by speaking. You could also send it photos, and it could understand them.

It could also generate photos for you. This is where the name multimodal comes from. Then, on 12th September 2024, OpenAI launched its o1 model.

This was the first model based on a chain of thought. When you asked it a question, it would think while answering. Thinking here means that whenever it generated an answer, it would counter-question itself before sending it to you.

Should I give this answer to the user? Or could there be a better answer than this? It would look at one answer from many angles, and only then give you the answer. This is called a chain of thought.

It is an attempt to copy the reasoning process of humans. For example, if you ask GPT-4o which is bigger, 9.11 or 9.9, it answers without thinking: 9.11 is bigger than 9.9. That is the wrong answer.

But when you ask ChatGPT o1 the same thing, it thinks. Before giving its answer, it counter-questions itself: is this the right answer? In my case, it kept thinking for 18 seconds.

But the answer was right: 9.9 is actually bigger. If you ask DeepSeek the same thing, you also get the right answer.
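The comparison itself is easy to verify. The short sketch below checks it with exact decimals and then shows one plausible source of the confusion: reading the numbers like software version numbers, where 9.11 comes after 9.9. That explanation is an assumption for illustration only, not a claim about what happens inside any model, and `as_version` is a hypothetical helper.

```python
from decimal import Decimal

# As decimal numbers, 9.9 is the same as 9.90, which is larger than 9.11.
a, b = Decimal("9.11"), Decimal("9.9")
print(max(a, b))  # 9.9

def as_version(text):
    """Hypothetical helper: split '9.11' into (9, 11), like a version number."""
    return tuple(int(part) for part in text.split("."))

# Read as version numbers, the order flips: (9, 11) sorts after (9, 9).
print(as_version("9.11") > as_version("9.9"))  # True
```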

The old AI models had a huge shortcoming, and the chain-of-thought process has fixed it. This is why models like o1 and DeepSeek are called advanced reasoning models.

They are much better at answering logically. Another good thing about DeepSeek is that it shows you, in more detail, what it is thinking step by step before answering.

When asked about what makes humans unique, for example, it starts by thinking of one such trait. But then it counter-questions itself.

The user might need something original. Maybe I should think about how these traits interact with each other in unexpected ways. You will notice that it tries to answer the question from different angles. You can keep watching the screen to see how long it thinks and how many possibilities it considers before giving the actual answer.

After a lot of thinking, its answer is that humans are the only species that uses storytelling as a cognitive exoskeleton. But when the same question was put to ChatGPT-4, the answer came instantly. It didn't think much.

It said whatever came first. Similarly, if you ask these older chatbots to pick a random number, they immediately pick one for you. But if you ask the chain-of-thought models to do this, they keep thinking for a long time.

The biggest drawback of DeepSeek since its release is its censorship. Ask it any critical question about China, such as what happened in Tiananmen Square in 1989, what the biggest criticisms of Xi Jinping are, whether Taiwan is an independent country, or why China is criticized for human rights abuses, and DeepSeek has only one answer. Sorry.

It says it is not sure how to approach this type of question, and suggests talking about math, coding and logic problems instead. But if you ask this same DeepSeek to criticize any other world leader, be it Joe Biden, Donald Trump or Putin, it answers in great detail.

In fact, every AI model made in China is tested with around 70,000 questions to check whether it gives safe answers to politically controversial questions. After this, none of these Chinese AI models answer such questions.

China's chief internet regulator, the Cyberspace Administration of China, runs this test. Some people say DeepSeek should be boycotted completely because its answers are filled with Chinese propaganda. But the important point here is that DeepSeek is open-source software.

Its code is publicly available, and anyone can download it. One way to use DeepSeek is to download its app from the App Store or Google Play Store.

The second way is to download its entire code and run the AI locally on your own computer. By doing this, you can change its code and modify it yourself according to your use case. American companies have already started doing this.

Take Perplexity AI. They downloaded the DeepSeek R1 model and removed all the censorship, and you can now use the R1 model in Perplexity as well.

Microsoft did the same thing. According to a news article from 29th January, the DeepSeek R1 model is available through Microsoft, and they have announced that you can use this model in Copilot as well.

And because it is open source, DeepSeek is being seen with great trust despite being Chinese. People have used it to make fun of American companies, especially companies like OpenAI that put the word open in their name.

In the beginning, when these companies were formed, they said they would work for the public and keep everything open source. But in reality, they didn't do that.

While trolling, Elon Musk has often called OpenAI Closed AI. And today, a Chinese AI has become more open than OpenAI.

Among today's top AI chatbots are OpenAI's ChatGPT, Anthropic's Claude, Google's Gemini, Qwen 2.5 from Alibaba (another Chinese company), and the Llama model from Meta, Facebook's parent company. How do they all compare with DeepSeek? According to Artificial Analysis, in coding DeepSeek is at the top, followed by ChatGPT, Claude, Qwen 2.5 and finally Llama. In quantitative reasoning, DeepSeek is at the top, then ChatGPT, then Qwen 2.5. In scientific reasoning and knowledge, ChatGPT is at the top, then DeepSeek, then Claude, and finally Llama.

When PCMag tested AI chatbots, they found that DeepSeek was the best at news knowledge. In calculations, ChatGPT and DeepSeek were equal. At writing poems, ChatGPT was better.

At making tables, ChatGPT was better, but at solving riddles, DeepSeek was better. On quality, the Artificial Analysis website rates ChatGPT at 90 and DeepSeek R1 at 89.

But a big disadvantage of DeepSeek is its response time. The latency graph shows how many seconds it takes to get an answer: o1 takes 31.1 seconds.

DeepSeek takes 71.2 seconds. And this response time has actually been increasing in recent days, because DeepSeek has become so popular that everyone wants to download and use it.

As a result, its servers are overloaded and busy, so it takes even longer to answer.

So this is a big disadvantage. But if we compare ChatGPT o1 and DeepSeek with each other, DeepSeek is the more innovative of the two. One example is the mixture-of-experts method.

When you ask ChatGPT o1 something, it works as one single model. Whatever your question, ChatGPT is the engineer, the doctor and the lawyer for you. DeepSeek, however, has divided itself into many specialized models.

In DeepSeek, the engineer, the doctor and the lawyer are all separate. Depending on your question, only the engineer or only the doctor is called. What does this achieve? Firstly, less time is needed for data transfer.

Secondly, the AI has to keep fewer parameters active. A traditional model keeps all of its parameters active all the time, reportedly as many as 1.8 trillion. DeepSeek has 671 billion parameters, but only 37 billion of them are active at any one time.

The rest stay inactive, which greatly increases its efficiency and greatly reduces the cost. What are these parameters and how do they work? That is a long story.
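To make the mixture-of-experts idea a little more concrete, here is a toy sketch of top-k routing in plain Python. It is not DeepSeek's actual implementation: the four "experts", the router scores and the function names are all made up for illustration, and a real model routes each token inside every layer rather than a whole question to a "doctor" or "engineer". The sketch only shows the core trick, which is that a router scores all the experts but only the best few are actually run.

```python
import math

def softmax(scores):
    """Turn raw router scores into probabilities that sum to 1."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route(router_scores, k=2):
    """Keep only the k highest-scoring experts and renormalise their weights."""
    probs = softmax(router_scores)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in chosen)
    return {i: probs[i] / norm for i in chosen}

# Hypothetical experts: each one just scales its input by a different factor.
experts = [lambda x, s=s: s * x for s in (1.0, 2.0, 3.0, 4.0)]

def moe_forward(x, router_scores, k=2):
    """Run only the selected experts and mix their outputs by router weight."""
    weights = route(router_scores, k)
    return sum(w * experts[i](x) for i, w in weights.items())

scores = [0.1, 2.0, -1.0, 1.5]   # made-up router scores for one input
print(route(scores))             # only experts 1 and 3 are selected
print(moe_forward(1.0, scores))  # experts 0 and 2 are never run

# Share of DeepSeek's parameters active at once, using the article's figures.
print(f"{37 / 671:.1%}")         # 5.5%
```

With the numbers quoted above, only about one parameter in eighteen is doing work for any given input, which is where the savings in compute come from.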

Another criticism being made against DeepSeek is that it built its AI models using OpenAI's. OpenAI has said it has evidence that DeepSeek used OpenAI's proprietary models to train its own.

They say they have seen evidence of distillation, a technique that uses the output of big models to improve the performance of small AI models, giving the small models similar abilities at a low cost. Someone tweeted about this, saying that the thief's house has been robbed. In a way, ChatGPT itself stole things from the entire internet to build itself.

Many books were used without permission to train its models. This is why, in September 2023, 17 well-known writers, including George R. R. Martin, the writer of Game of Thrones, filed a case for copyright infringement. The New York Times has also filed a case against OpenAI and Microsoft.

Apart from this, 8 American newspapers and many other news outlets have also filed cases against OpenAI. This is why memes have gone viral in which OpenAI goes fishing and keeps its catch in a bucket, while DeepSeek comes up from behind and starts fishing from that same bucket.

As users, this is a good thing for us, but at the level of countries and companies, AI wars are now raging. In 2022, the American government implemented export controls so that other countries, and Chinese AI companies especially, couldn't get the computer chips required for AI.

These included NVIDIA's H100 chips, which Chinese companies couldn't buy. This was a problem for DeepSeek, because they had to use NVIDIA's older computer chips to train their AI model. America had tried to make sure that no other country could build foundational AI models like its own, because they wouldn't have the computer chips needed to train them.

But the people working at DeepSeek were forced to innovate. They built software that uses far fewer resources and works more efficiently. This is the reason NVIDIA's stock fell the most, because a few months ago, companies like Meta, OpenAI and Google were saying that to scale AI to a higher level, we need more chips.

More energy and money would be needed too. But DeepSeek did all this and showed that it can be done with less money, less energy and less powerful computer chips. We should see it as a motivation.

If it can be done in China, then it can be done in India as well. Indians also have the capability to make such innovations. We just need to focus and put our energy in the right place.

On a personal level, take advantage of this opportunity to upskill yourself in the field of AI. If you haven’t started yet, then you’re not late. This is the right time to learn AI and to increase your productivity and efficiency through AI.

Because there is no doubt that those who ignore this technology will be left behind in the future. It is difficult to imagine how big an impact AI will have on the world, in every sector and every field.
